Section 1

Putting it All Together: Predicting Q-Day

Relying solely on physical qubit count to estimate progress toward Q-Day is insufficient. Today’s quantum computers fall far below any reasonable threshold of cryptographic relevance. But in recent years, the predominant effort to develop a fault-tolerant quantum computer has not focused on scaling the raw number of physical qubits. Instead, it has focused on creating the conditions (better error-correcting codes, control stacks, and system integration) under which the number of physical qubits can later be scaled up in a comparatively straightforward way.

Thus, criticisms of quantum computing along the lines of “they can’t factor 21 yet” are a red herring: once the foundation across Layers 1–4 is solid, scaling up the physical qubits to factor cryptographically relevant numbers may be the “easy” part. These scaling challenges are not fundamental barriers, but they do represent engineering unknowns, which make extrapolating from today’s small-scale demonstrations to fully fault-tolerant systems running thousands of qubits over days or weeks genuinely uncertain.¹

Fundamentally, the latest generation of quantum computers being designed today could run circuits of much greater volume, and potentially reach the scale of cryptographic relevance, through:

- Fabrication and integration of an increasing number of physical qubits into the system.

- Physical qubits with lower baseline error rates, which require less error correction and therefore yield a higher logical qubit capacity (LQC). See Appendix B for a detailed treatment of the various physical modalities/types of qubits.

- More efficient quantum error correction (QEC) techniques, which suppress the impact of noise during operation with less overhead and thereby increase the logical operations budget (LOB).

- More robust and streamlined control planes, which provide a more resilient quantum-classical interface and thereby a higher quantum operations throughput (QOT).

- Better cryptanalytic algorithms specific to ECC, as well as hybrid classical-quantum approaches, that reduce the logical compute capacity (LCC) required to reach the threshold of cryptographic relevance.

Once someone has built a quantum computer with enough LCC to run a cryptanalytic algorithm such as Shor’s at cryptographically relevant scale, we’ve arrived at Q-Day.
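As a rough illustration of how these metrics combine, the sketch below treats Q-Day as the point where a machine’s capacity covers an algorithm’s requirements on three axes at once: enough logical qubits (LQC), a large enough logical operations budget (LOB) to finish without an expected failure, and enough throughput (QOT) to finish within an acceptable runtime. The class and field names, the one-week runtime limit, and the machine figures are hypothetical; the report’s actual definitions are in Appendix E. The algorithm figures (~1,000 logical qubits, ~5T operations) come from the baseline column of the scenario table in the bottom-up analysis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Machine:
    """Hypothetical capacity figures for a fault-tolerant machine."""
    lqc: int     # logical qubit capacity (circuit width)
    lob: float   # logical operations that can run before an expected failure
    qot: float   # logical operations executed per second


@dataclass(frozen=True)
class Algorithm:
    """Hypothetical resource requirements of a cryptanalytic algorithm."""
    width: int            # logical qubits required
    ops: float            # logical operations required
    max_runtime_s: float  # longest acceptable wall-clock runtime (assumed)


def reaches_q_day(m: Machine, a: Algorithm) -> bool:
    """True only if the machine meets the width, budget, and runtime requirements."""
    enough_width = m.lqc >= a.width
    enough_budget = m.lob >= a.ops
    fast_enough = a.ops / m.qot <= a.max_runtime_s
    return enough_width and enough_budget and fast_enough


ONE_WEEK_S = 7 * 24 * 3600  # assumed runtime ceiling
attack = Algorithm(width=1_000, ops=5e12, max_runtime_s=ONE_WEEK_S)

print(reaches_q_day(Machine(lqc=1_200, lob=1e13, qot=1e7), attack))  # True (about 5.8 days)
print(reaches_q_day(Machine(lqc=1_200, lob=1e13, qot=1e6), attack))  # False (about 58 days)
```

Requiring all three conditions captures the idea that width alone is not sufficient: a machine that is wide enough but too error-prone or too slow still falls short.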

Estimating Q-Day: Three Approaches

To estimate when that may occur, we take three different approaches:

Survey of Experts

Expert surveys of quantum computing researchers. These tend to skew more pessimistic or uncertain, reflecting researchers’ awareness of the engineering challenges that remain.

Published Roadmaps

Publicly announced roadmaps from leading quantum computing companies across all major hardware modalities. These tend to skew more optimistic.

Bottom-Up Analysis

A simplified model simulating progress in both “supply” (quantum computing capability in terms of LCC) and “demand” (algorithm parameters). Scenarios range from optimistic to pessimistic.

1. Survey of Experts


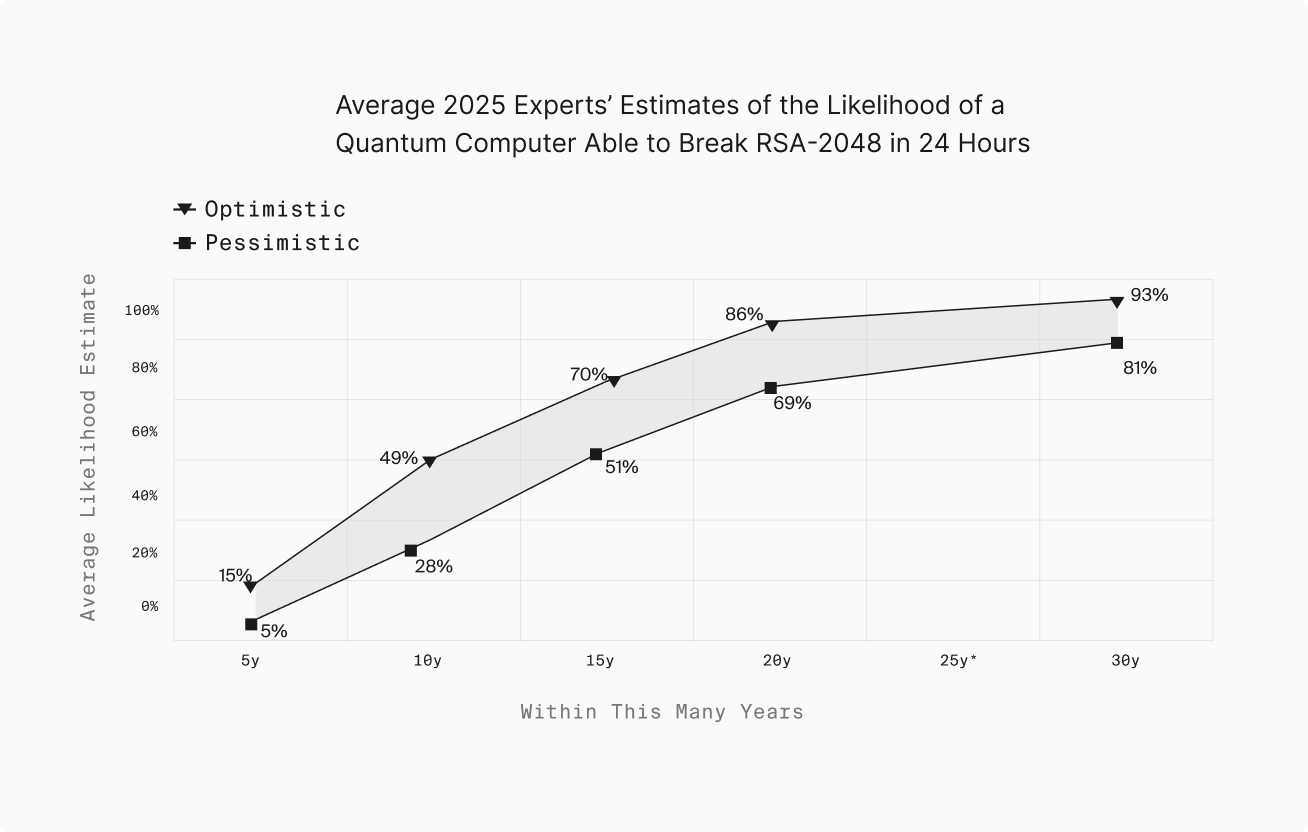

The 2025 Quantum Threat Timeline Report by Michele Mosca and Marco Piani surveys leading experts in quantum computing to estimate when a cryptographically relevant quantum computer (CRQC) capable of breaking widely used public-key cryptography may emerge. The report gathers responses from researchers and industry leaders and analyzes their expectations about progress in hardware, error correction, and algorithmic improvements. Its central focus is the timeline for a quantum computer capable of running algorithms such as Shor’s algorithm at a scale sufficient to break systems like RSA.

The survey results suggest that experts consider a CRQC plausible within a few decades, though significant uncertainty remains. The report presents probability estimates under which such a machine could appear by roughly the 2030s–2040s, and some respondents assign non-negligible probability to earlier breakthroughs. Much of the uncertainty stems from open questions around scaling hardware, improving qubit fidelity, and implementing large-scale quantum error correction. Respondents also highlighted important milestones on the path to a CRQC, including demonstrating stable logical qubits, improving physical gate fidelities, and scaling systems to thousands or millions of qubits.

2. Published Roadmaps (& Physical Qubit Milestones)

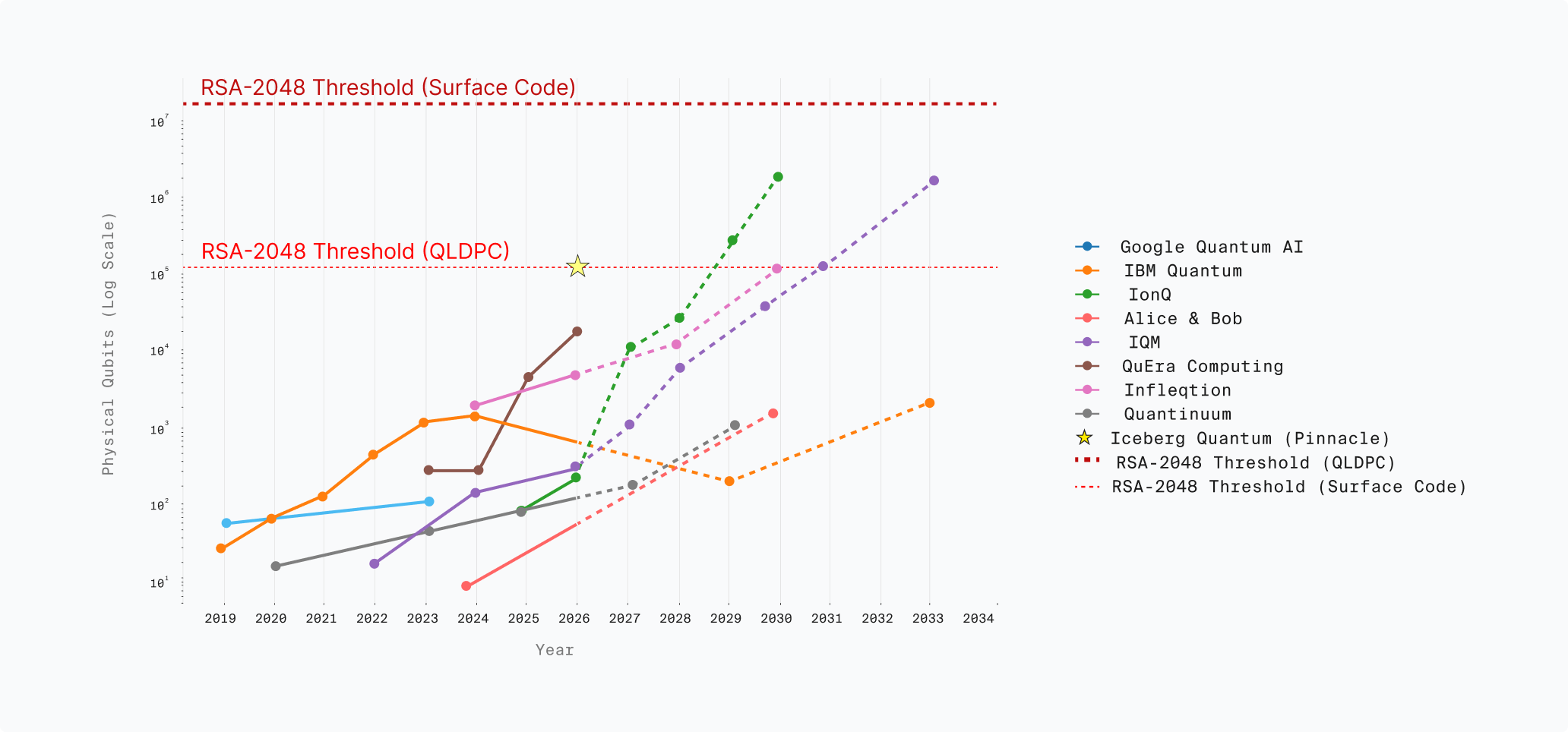

Physical Qubits vs RSA-2048 Thresholds

Published Company Roadmaps

The following table summarizes publicly announced roadmaps from the leading quantum computing companies across all major hardware modalities. This is probably the most optimistic of the three estimates, since it comes from the companies that are themselves trying to build CRQCs.

Even allowing for that optimism, it is notable that multiple companies project reaching the threshold of cryptographic relevance before the end of the decade. The latest resource estimates also suggest that elliptic curve cryptography (such as ECDSA over secp256k1, used in Bitcoin) is easier for a quantum computer to break than RSA, although significant engineering challenges remain in building a real-time control stack that can accommodate the high-rate error-correcting codes those constructions rely on.

Key observations

- IBM’s physical qubit count decreases after 2024 because of a shift in focus toward lower error rates: fewer, but more error-resistant, qubits.

- Companies targeting qLDPC codes project significantly lower physical qubit thresholds than those using surface codes.

- ECC-256 (used in Bitcoin/Ethereum) has a lower resource threshold than RSA-2048, meaning Q-Day for blockchains may arrive earlier than RSA-focused estimates suggest.

3. Bottom-Up Analysis

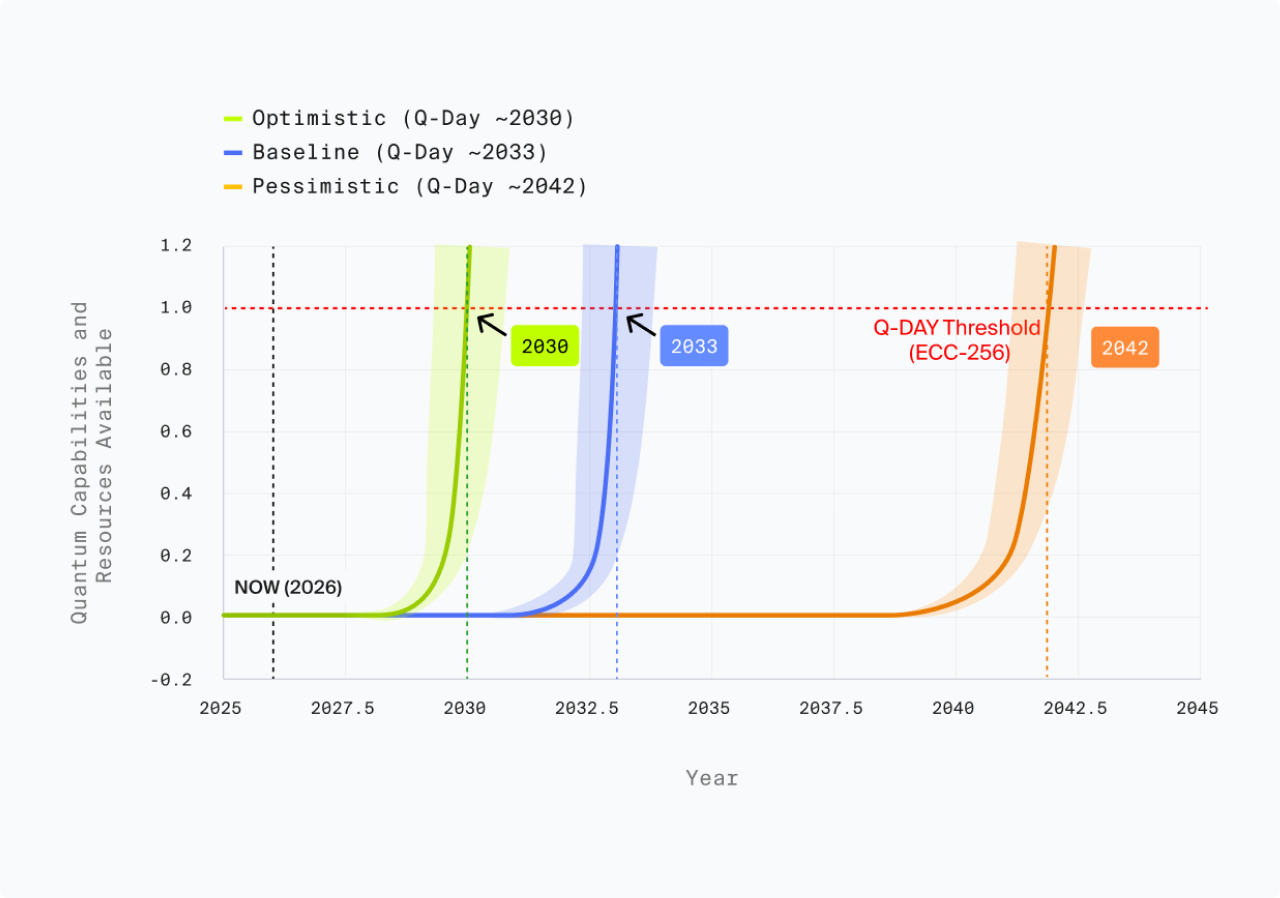

Utilizing a simplified model to simulate progress for both the “supply” (quantum computing capability in terms of LCC) and “demand” (algorithm parameters), we can simulate various scenarios for how quantum computing scales. The full parameters for this model, assumptions, and definitions are detailed in Appendix E. Note that this is not a full resource estimate, but the parameters are based on (and the results are largely consistent with) Gidney’s 2025 resource estimate [6].

Based on known parameters, historical trends, and scenario analysis with different future growth trajectories, we estimate that Q-Day is likely to occur within the next 4 (optimistic case) to 16 (pessimistic case) years. Note that this model assumes no major breakthroughs, only relatively modest year-over-year improvement. If physical error rates were to suddenly drop by another order of magnitude, the world could face Q-Day even before 2030.²

| Parameter | 2021 (Historical) | Baseline (2026) | Pessimistic → 2042 | Moderate → 2033 | Optimistic → 2030 |
|---|---|---|---|---|---|
| Physical Qubit Count | 1,000 | 3,000 | +1.5× / year | +2× / year | +3× / year |
| Qubit Quality (2Q Gates) | 99.5% | 99.95% | +5% / year | +10% / year | +15% / year |
| Error Correction Efficiency | No below-threshold demonstrated | Surface code (x² overhead) | +2% / year | +5% / year | +8% / year |
| Control Overhead | N/A | 80% LQC overhead; 1.5× circuit width; 3× depth penalty | +2% / year | +5% / year | +8% / year |
| Algorithm Requirements | ~6T ops / 6,000 LQC min | ~5T ops / 1,000 LQC | ±0% reduction | −2.5% / year | −5% / year |
| Q-Day | — | — | 2042 (16 years) | 2033 (7 years) | 2030 (4 years) |
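To make the compounding in the table concrete, here is a deliberately stripped-down, single-factor sketch: it only asks when a physical qubit count growing at each scenario’s rate catches up with a physical-qubit requirement that shrinks at that scenario’s algorithm-improvement rate. The one-million-qubit requirement is an illustrative assumption (roughly the “less than a million noisy qubits” scale of Gidney’s 2025 estimate [6]), applying the algorithm-reduction rate to physical qubits is also an assumption, and the function name is hypothetical. Because it ignores qubit quality, error-correction, and control improvements, this is not the Appendix E model, and its outputs should not be read as a forecast.

```python
import math


def years_to_crossover(supply0: float, supply_growth: float,
                       demand0: float, demand_cut: float) -> float:
    """Years t until supply0 * supply_growth**t first reaches demand0 * (1 - demand_cut)**t."""
    if not 0.0 <= demand_cut < 1.0:
        raise ValueError("demand_cut must be in [0, 1)")
    if supply0 >= demand0:
        return 0.0
    relative_rate = supply_growth / (1.0 - demand_cut)
    if relative_rate <= 1.0:
        return math.inf  # supply never catches up
    return math.log(demand0 / supply0) / math.log(relative_rate)


# (physical-qubit growth multiplier, yearly algorithmic reduction) per scenario,
# taken from the table above; the 1,000,000-qubit demand is an assumption.
SCENARIOS = {
    "pessimistic": (1.5, 0.0),
    "moderate": (2.0, 0.025),
    "optimistic": (3.0, 0.05),
}

for name, (growth, cut) in SCENARIOS.items():
    t = years_to_crossover(3_000, growth, 1_000_000, cut)
    print(f"{name:>11}: ~{t:.1f} years")
```

Solving supply0·g^t = demand0·(1 − r)^t for t gives t = ln(demand0/supply0) / ln(g/(1 − r)). With these inputs the script prints roughly 14.3, 8.1, and 5.1 years; the differences from the table’s 16, 7, and 4 years come from the factors this sketch leaves out.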

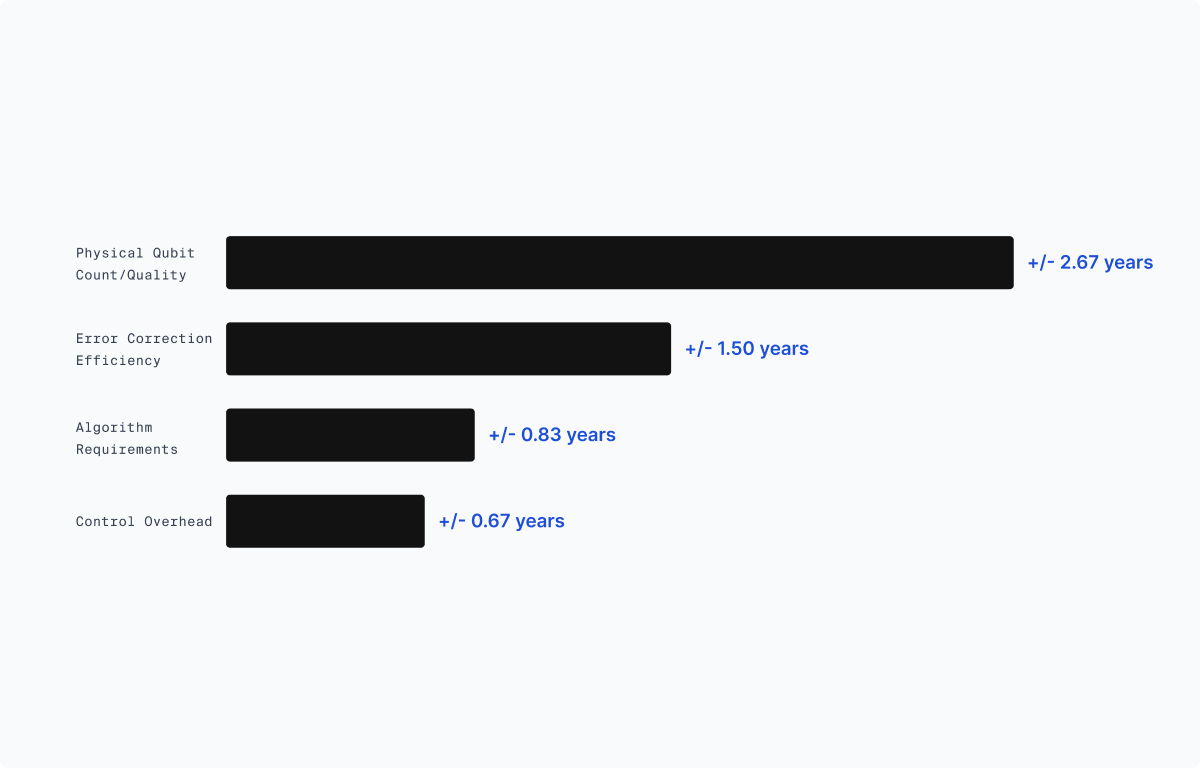

To illuminate the relative weight of different factors in our model, we present a sensitivity analysis based on the simplified four-layer quantum computing framework developed earlier in this report.

Sensitivity Analysis: QC Component Impact on Q-Day Timeline

As we can see, in the simplified four-layer model, the dominant determinant of cryptographic relevance is scaling the physical layer: the number and quality of physical qubits. Improvements at the error-correction layer are the next most important, largely because they reduce physical-to-logical overhead. Algorithmic and control-layer improvements remain meaningful, and their relative importance depends on the exact architecture being used.
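A common way to produce this kind of ranking is one-at-a-time (OAT) sensitivity analysis: improve a single growth rate while holding the others fixed, and record how far the crossover year moves. The toy model below is a hypothetical stand-in for the Appendix E model: usable width is physical qubits divided by a physical-to-logical overhead, reduced by a control-overhead fraction, and compared against a width requirement that shrinks with algorithmic progress. The yearly rates and the 3,000-qubit, 80%, and 1,000-LQC starting points follow the table’s baseline and moderate columns; the 1,000 physical qubits per logical qubit, the reading of “80% LQC overhead” as capacity lost to control, and all names are assumptions.

```python
from dataclasses import dataclass, replace
from typing import Dict, Optional


@dataclass(frozen=True)
class ToyParams:
    # Baseline-year values
    phys0: float = 3_000      # physical qubits (table baseline)
    overhead0: float = 1_000  # physical qubits per logical qubit (assumed)
    ctrl0: float = 0.8        # fraction of logical capacity lost to control (assumed reading)
    demand0: float = 1_000    # logical qubits required (table baseline)
    # Yearly improvement rates (table's moderate column)
    phys_growth: float = 2.0  # multiplier on physical qubits per year
    qec_gain: float = 0.05    # fractional yearly cut in physical-to-logical overhead
    ctrl_gain: float = 0.05   # fractional yearly cut in control loss
    alg_gain: float = 0.025   # fractional yearly cut in required width


def usable_logical_qubits(p: ToyParams, t: int) -> float:
    """Logical qubits available for the algorithm t years after the baseline."""
    phys = p.phys0 * p.phys_growth ** t
    overhead = p.overhead0 * (1 - p.qec_gain) ** t
    ctrl_loss = p.ctrl0 * (1 - p.ctrl_gain) ** t
    return phys / overhead * (1 - ctrl_loss)


def crossover_year(p: ToyParams, horizon: int = 50) -> Optional[int]:
    """First whole year t at which usable width meets the shrinking requirement."""
    for t in range(horizon + 1):
        if usable_logical_qubits(p, t) >= p.demand0 * (1 - p.alg_gain) ** t:
            return t
    return None


RATE_FIELDS = ("phys_growth", "qec_gain", "ctrl_gain", "alg_gain")


def one_at_a_time(p: ToyParams, bump: float = 0.10) -> Dict[str, Optional[int]]:
    """Shift in crossover year when each rate alone is improved by `bump` (relative)."""
    base = crossover_year(p)
    deltas: Dict[str, Optional[int]] = {}
    for name in RATE_FIELDS:
        bumped = replace(p, **{name: getattr(p, name) * (1 + bump)})
        year = crossover_year(bumped)
        deltas[name] = None if base is None or year is None else year - base
    return deltas


print("crossover (years after baseline):", crossover_year(ToyParams()))
print("shift per 10% improvement:", one_at_a_time(ToyParams()))
```

The 10% relative bump is itself a modeling choice: a 10% larger qubit-growth multiplier is a much bigger change than a 10% larger error-correction gain, so how each perturbation is normalized can shape the ranking as much as the model does. Whole-year resolution also hides smaller shifts.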

Note that this model is anchored to Gidney’s 2025 resource estimate, which sets the width (LQC) requirement at 1,000 logical qubits. As a result, reaching this width becomes the dominant bottleneck, and once logical qubit counts start to scale, the model converges rapidly on Q-Day.
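To see why width dominates, it helps to translate a 1,000-logical-qubit requirement into physical qubits. The sketch below uses a widely quoted surface-code rule of thumb, p_L ≈ 0.1·(p/p_th)^((d+1)/2) with threshold p_th ≈ 1%, together with the rotated surface code’s 2d² − 1 physical qubits per logical qubit, and treats the two-qubit gate error as the physical error rate p. Setting the per-operation logical error target to 1/(5 × 10¹²), so that roughly 5T operations incur about one expected failure, is a simplifying assumption, as are the function names. The sketch also ignores routing space and magic-state production, which full resource estimates account for.

```python
def logical_error_rate(p_phys: float, d: int, p_th: float = 1e-2,
                       prefactor: float = 0.1) -> float:
    """Rule-of-thumb surface-code logical error rate (assumed scaling form)."""
    return prefactor * (p_phys / p_th) ** ((d + 1) / 2)


def code_distance(p_phys: float, target: float, p_th: float = 1e-2,
                  max_d: int = 101) -> int:
    """Smallest odd code distance whose estimated logical error rate meets target."""
    if p_phys >= p_th:
        raise ValueError("physical error rate must be below threshold")
    for d in range(3, max_d + 1, 2):
        if logical_error_rate(p_phys, d, p_th) <= target:
            return d
    raise ValueError("target not reachable within max_d")


def physical_qubits(n_logical: int, d: int) -> int:
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits each."""
    return n_logical * (2 * d * d - 1)


TARGET = 1 / 5e12  # assumed per-operation error budget for ~5T logical operations

for p in (1e-3, 5e-4, 1e-4):
    d = code_distance(p, TARGET)
    print(f"p={p:.0e}: distance {d}, {physical_qubits(1_000, d):,} physical qubits")
```

Under these assumptions, 99.9% two-qubit fidelity (p = 10⁻³) calls for distance 23, about 1.06 million physical qubits for 1,000 logical qubits; the table’s 99.95% baseline (p = 5 × 10⁻⁴) calls for distance 17, about 577,000; and p = 10⁻⁴ calls for distance 11, about 241,000. This is the sense in which better qubit quality and better codes shrink the physical-to-logical overhead.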

Project Eleven Q-Day Forecast

Key Takeaways

- Using three distinct methodologies (expert assessment, published quantum roadmaps, and our own bottom-up analysis), we assess that Q-Day is highly likely to occur within the next decade.

- With below-threshold error correction now demonstrated, the primary bottleneck to reaching cryptographic relevance is logical circuit width (LQC), which is itself determined by physical qubit number, physical qubit quality, the physical-to-logical qubit ratio, and algorithmic overhead. Based on the best available resource estimates and algorithm variants, we assess that LQC is the dominant variable affecting Q-Day timelines.

- Both the expert surveys and our own model are anchored to algorithms targeting RSA-2048. A major open question is whether breaking elliptic curve cryptography is as hard as breaking RSA; if it is easier, as the latest resource estimates suggest, Q-Day may arrive much earlier than our model indicates.

While these projections offer a useful framework for thinking about progress, they remain highly uncertain for the reasons discussed below.