Section 1

The Path Forward

Since the below-threshold demonstration by Google, quantum computing is no longer a challenge of science, but a challenge of engineering (albeit a significant one). The major open question is now whether the logical regime, demonstrated multiple times at small scales, can be scaled to cryptographic relevance. But the design space for how to address the various scaling bottlenecks is quite large. Moreover, the more quantum computers improve, the faster progress accelerates.

Progress toward a cryptographically relevant quantum computer (CRQC) is unlikely to be linear. Instead, advances will occur in discrete jumps as individual bottlenecks in the stack are removed. At any given time the system is constrained by its weakest component; once that constraint is lifted, the next bottleneck becomes dominant.
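The bottleneck dynamic can be illustrated with a toy model. This is a minimal sketch, not a forecast: the layer names, the scores, and the 4× improvement factor are all hypothetical values chosen only to show the stepwise pattern.

```python
# Toy model: the stack is only as capable as its weakest layer.
# All layer names and scores below are hypothetical, for illustration only.

def system_capability(layers: dict) -> float:
    """Overall capability is limited by the weakest layer."""
    return min(layers.values())

def bottleneck(layers: dict) -> str:
    """Name of the layer currently limiting the system."""
    return min(layers, key=layers.get)

layers = {
    "qubit_count": 3.0,
    "gate_fidelity": 1.0,
    "decoder_throughput": 2.0,
    "interconnect": 5.0,
}

history = []
for _ in range(3):
    name = bottleneck(layers)
    history.append((name, system_capability(layers)))
    layers[name] *= 4.0  # hypothetical breakthrough in the limiting layer

for name, capability in history:
    print(f"bottleneck={name:<20} capability={capability}")
print(f"final capability={system_capability(layers)}")
```

In this sketch each breakthrough lifts overall capability only as far as the next-weakest layer, so capability moves in steps (1, 2, 3, 4) while the bottleneck shifts from one layer to another.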

At the same time, improvements in different layers of the stack compound multiplicatively. Higher physical gate fidelity reduces the code distance required for fault tolerance, which lowers physical-to-logical overhead and increases the number of available logical qubits. Because these effects reinforce one another, solving a single bottleneck can dramatically expand the feasible computational volume.

Taken together, these dynamics mean that progress toward a CRQC can occur along several independent fronts simultaneously. Improvements in hardware, error correction, and algorithms all interact with one another, and advances in any one layer can dramatically expand the feasible computational regime. As a result, the trajectory toward cryptographic relevance is not defined by a single critical path, but by multiple avenues of parallel progress.

1. Multiple Physical Approaches

Superconducting, trapped-ion, neutral-atom, silicon-spin, and photonic modalities each have their own advantages and challenges, and the development and scaling of each approach is (to some extent) independent of the others.

As a result, quantum progress is not restricted to a single technology or “critical path”. In fact, a major motivation for developing alternatives to the superconducting regime (pioneered by companies like IBM, Rigetti, and Google) was the inherent challenge of scaling. This led to the development of trapped-ion and neutral atom-based approaches. Trapped ions, in particular, have the lowest baseline error rates of any modality, meaning the “workload” of the error correction stack can be somewhat lighter. Meanwhile, neutral atoms, also very stable, feature reconfigurable connectivity, making it easier to apply newer and more efficient error correction techniques. Both of these modalities also have their own control mechanisms, and both favor different error correction regimes.

Newer and more exotic modalities are pushing the boundaries of theoretical possibility even further. The silicon-spin modality takes advantage of existing CMOS fabrication techniques to ensure reliable fabrication of qubits at scale. Meanwhile, photonic-based systems have the potential to run at the speed of superconducting qubits, but at room temperature instead of inside a dilution-refrigeration system.

Each approach also has its challenges. But solving these challenges requires distinct approaches, so the space for a potential breakthrough is quite large.

Moreover, these tech trees are not only progressing in parallel; they are increasingly reinforcing one another. Photonic technology, in addition to being a modality in its own right, is also being explored as a means of interconnecting distinct quantum systems, enabling modular heterogeneous architectures that combine the strengths of multiple physical modalities. Q-CTRL’s recent work [12] illustrates how mixing different error-correcting code families and specialized processing units across modules can yield substantial efficiency gains, and DARPA has formalized this direction through its Heterogeneous Architectures for Quantum (HARQ) program. The practical consequence is that progress in any one modality may now compound into progress for the others, broadening the space of possible breakthroughs even further.

2. Error Correction Improvements Compound

Second, because reducing error rates is the fundamental challenge in scaling a cryptographically relevant quantum computer, even small improvements in physical fidelity or code efficiency can have massive downstream effects.

Operating below threshold means that each increase in code distance suppresses the logical error rate exponentially. But improving the underlying physical error rate also reduces the code distance needed to achieve a target logical fidelity. And better error-correcting codes that require less overhead have a compounding effect, because error correction is applied at every cycle of the computation. These effects multiply: better physical qubits enable smaller error-correcting codes, and smaller error-correcting codes require fewer physical resources.
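A rough sketch of this relationship, using the commonly quoted surface-code heuristic p_L ≈ A · (p / p_th)^((d+1)/2) and the rotated surface code's 2d² − 1 physical qubits per logical qubit. The prefactor A = 0.03, the threshold p_th = 1%, and the 10⁻¹² target are illustrative assumptions, not figures from the estimates cited in this report; real devices and decoders will differ.

```python
# Heuristic surface-code scaling. Constants are illustrative assumptions.

def logical_error_rate(p: float, d: int,
                       p_th: float = 1e-2, prefactor: float = 0.03) -> float:
    """Approximate logical error rate per round at code distance d."""
    return prefactor * (p / p_th) ** ((d + 1) / 2)

def min_distance(p: float, target: float, p_th: float = 1e-2) -> int:
    """Smallest odd distance whose heuristic logical error meets the target."""
    if p >= p_th:
        raise ValueError("physical error rate must be below threshold")
    d = 3
    while logical_error_rate(p, d, p_th) > target:
        d += 2  # surface-code distances are conventionally odd
    return d

def physical_per_logical(d: int) -> int:
    """Rotated surface code: d*d data qubits plus d*d - 1 measurement qubits."""
    return 2 * d * d - 1

target = 1e-12  # assumed target logical error rate
for p in (1e-3, 5e-4, 1e-4):
    d = min_distance(p, target)
    print(f"p={p:.0e}  distance={d}  physical/logical={physical_per_logical(d)}")
```

Under these assumptions, improving the physical error rate from 0.1% to 0.01% lowers the required distance from 21 to 11 and cuts the per-logical-qubit footprint from 881 to 241 physical qubits, before any gains from more efficient codes are counted.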

This feedback loop can massively reduce the cost to run Shor’s algorithm.

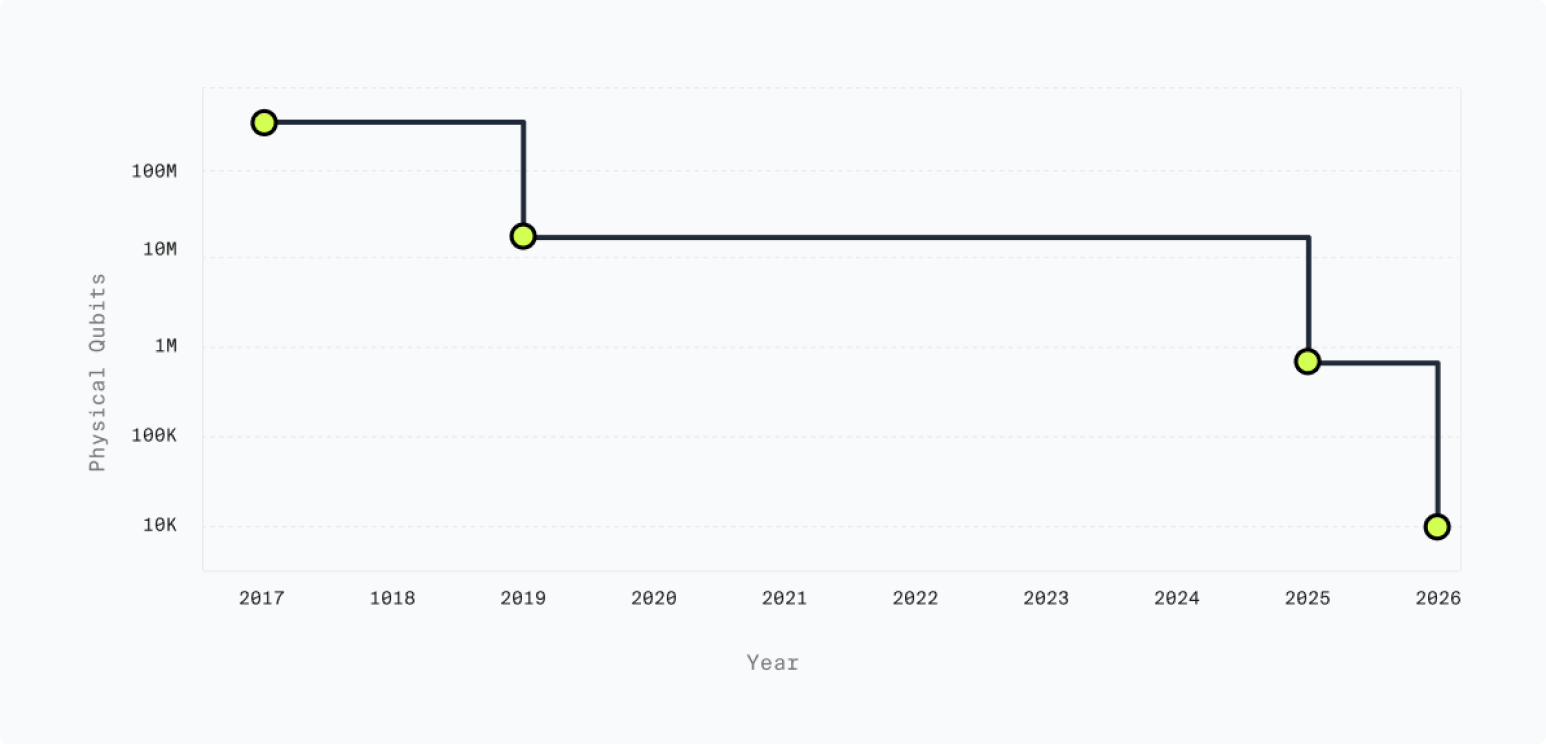

Physical Qubits Required for Shor's Algorithm

This compounding is already evident in the trajectory of resource estimates for breaking RSA-2048 with Shor’s algorithm: a 20× reduction from 20 million to approximately 1 million physical qubits occurred in just four years, driven almost entirely by improvements in error correction efficiency, not in physical hardware. The 2025 Gidney estimate assumes the same physical hardware parameters as 2021 (0.1% gate error rate, 1-microsecond surface code cycle time, nearest-neighbor superconducting grid), demonstrating that better algorithms and error correction techniques alone can collapse resource requirements dramatically. Further research [10] shows that the shift from surface codes to qLDPC codes can yield another order-of-magnitude reduction, bringing the total physical qubit count into a range that multiple hardware platforms are credibly targeting within the next several years.1
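The arithmetic behind these figures can be checked in a few lines. This is a back-of-the-envelope sketch: the per-year rate is simply the geometric mean implied by the two estimates, and the qLDPC projection treats "an order of magnitude" as exactly 10×, which is an assumption made here for illustration.

```python
# Back-of-the-envelope check of the resource-estimate trajectory.
estimates = {2021: 20_000_000, 2025: 1_000_000}  # physical qubits, RSA-2048

reduction = estimates[2021] / estimates[2025]
years = 2025 - 2021
annual_factor = reduction ** (1 / years)  # implied geometric-mean yearly gain

# Assumption: "another order of magnitude" taken as exactly 10x.
qldpc_projection = estimates[2025] / 10

print(f"total reduction: {reduction:.0f}x over {years} years")
print(f"implied yearly reduction: {annual_factor:.2f}x")
print(f"illustrative qLDPC-era estimate: {qldpc_projection:,.0f} physical qubits")
```

The implied rate is a little over 2× per year, and the illustrative qLDPC figure lands near 100,000 physical qubits.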

Subsequent research published in early 2026 reinforces this trajectory across multiple architectures and code families. In parallel to the Google work [2] cited throughout this report, several architecture-aware proposals using qLDPC and lifted-product codes converge on similar reductions: IonQ’s “Walking Cat” design demonstrates 110 logical qubits in roughly 2,500 physical trapped-ion qubits with a streaming decoder, Q-CTRL’s heterogeneous Q-NEXUS architecture reports a 138× physical-qubit reduction over surface-code baselines under detailed accounting [12], and a neutral-atom proposal from Caltech and Oratomic positions Shor’s algorithm at cryptographically relevant scales using as few as 10,000 reconfigurable atomic qubits with all-to-all connectivity [3]. These works rely on different assumptions, but they describe a consistent picture: qLDPC codes and architecture-aware compilation are dragging the resource floor down across every modality, simultaneously.

Progress in classical machine learning is also now feeding back into the quantum stack itself. Real-time syndrome decoding has long been considered one of the practical bottlenecks for deploying qLDPC codes at scale, and recent work from Harvard demonstrates that a convolutional neural network decoder (“Cascade”) can suppress logical error rates by more than an order of magnitude relative to existing decoders for qLDPC codes, while delivering three to five orders of magnitude higher throughput and meeting real-time latency budgets on several leading hardware platforms [13]. That a key decoder bottleneck appears to yield to a relatively standard deep learning architecture is a useful illustration of how AI infrastructure and the quantum computing stack can complement one another.

3. Algorithmic Optimizations Lower the Bar

Third, progress happens not just in hardware and error correction, but in algorithms themselves. There are multiple independent threads of algorithmic improvement, all of which lower the bar for cryptographic relevance:

- Circuit-level optimizations: Recent work targeting the Ed25519 curve exploits the isomorphism between Edwards and Weierstrass forms to reduce resource requirements by 75% on the key bottleneck metric and qubit requirements by 12% [14]. These optimizations also extend to NIST-standard prime fields, meaning the improvements apply broadly.

- Arithmetic optimizations: The introduction of approximate residue arithmetic reduced the number of logical qubits needed from ~6,000 to ~1,730 for RSA-2048 [6]. Subsequent work combined this with logical-layer improvements and improved code constructions to achieve a further 100× reduction in required resources [102].

- Alternative factoring algorithms: A 2023 proposal for a quantum factoring algorithm with reduced circuit size represents an asymptotic improvement over Shor’s [15]. While the practical advantage over optimized Shor’s remains unclear for current parameters, it represents an additional avenue for future improvement.

These algorithmic breakthroughs effectively lower the bar for what counts as “cryptographically relevant.” While hardware capabilities are climbing, the target is simultaneously getting easier to hit. Progress can come from both directions, and indeed it has.